Snapshot 15: “Approaches to determining the impact of digital finance programs” addresses one of the key questions of FiDA’s 16 Learning Themes. The FiDA Partnership synthesizes the digital finance community’s knowledge of these Learning Themes as “Snapshots” that cover topics at the client, institution, ecosystem, and impact levels.

Spoiler: Snapshot 15, “Approaches to determining the impact of digital finance programs,” is not the key to a cheap, fast impact method that works with any program. Blame complexity, and the digital finance community’s many innovations. If we replicated similar products with similar clients in similar markets, then maybe. But reality is more complicated than that.

Instead of one method to rule them all, Snapshot 15 explores different approaches to client impact measurement in order to expand the digital finance impact community and encourage the inclusion of various methods to advance the state of knowledge about the impacts of digital finance.

There is an impact conversation and we need you to join it

At FiDA we see impact assessment as a process more akin to a conversation than to the rendering of a verdict. One need not (and should not) rely on “capstone” documents shared only at the end of a successful project. Instead, impact conversations are broad, ongoing, multi-voiced dialogues about what is and is not working. Different study designs inform impact conversations in different ways at different stages.

But why should you, as a program manager or a product developer, pay attention to and share non-experimental research? The digital finance community needs to continue innovating to push the industry forward, and such innovation may be hindered if there is a two-year wait for the results of an impact study. We need to build on the insights available. Individually, impact insights may appear insignificant, but collectively they are necessary pieces of this crucial conversation. See the Digital Finance Evidence Gap Map (EGM) for an illustration of the power of collective impact insights.

Impact insights from diverse sources are valuable and advance the community

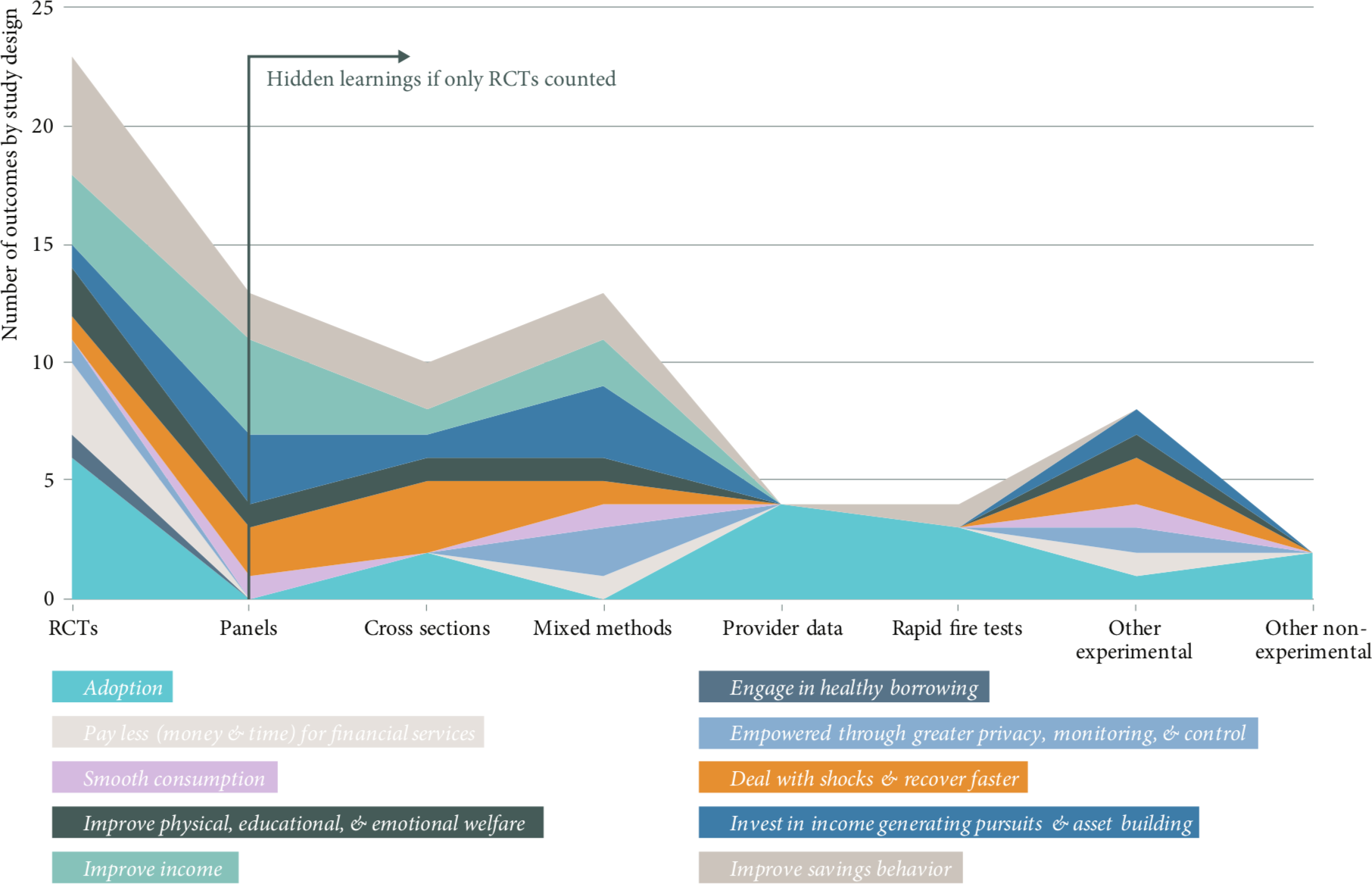

Certain experimental methods have been put on a pedestal. While experimental designs with control groups can produce powerful insights, the various setups of digital finance programs mean that experimental methods are not always an option. That is OK. Experimental methods are not the only way to gather impact insights. For instance, if the FiDA EGM only included Randomized Controlled Trials (RCTs), our opportunity to learn would be vastly reduced. Including studies that used an array of methods has added appreciably to the digital finance sector’s knowledge at a time when evidence is limited.

What approaches have been used to explore the impact of digital finance?

In Snapshot 15 we describe a variety of approaches that have been used in digital finance impact research, to give a sense of the possible approaches from which researchers and developers can draw. The methods we highlight represent primary approaches that can be coupled with other tools. For example, a panel study can be bolstered with Most Significant Change stories that provide clients’ versions of impact. The evaluation toolbox is full of approaches wherein primary methods can be enhanced by other tools to confirm, refute, explain, or enrich the findings.

So what can you do to measure impact?

Invest in a strong theory of change and test it.

A theory of change describes how a program is expected to contribute to change and in which conditions it might do so. That is, “If we do X, Y will happen because…”. In Snapshot 15 we also describe the attributes of a robust theory of change. Impact research based on robust theories of change guides choices about when and how to measure outcomes. This simplifies decisions on the choice of tools in the research toolbox.

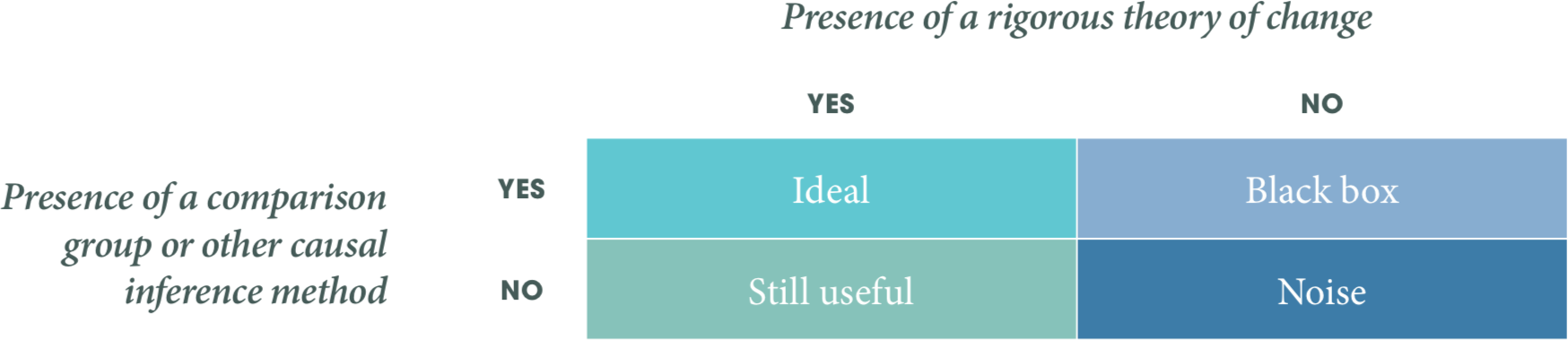

Look for comparison groups when you can—but it’s OK if you can’t find them

Strong theories of change coupled with comparison groups and/or other causal inference methods are the ideal standard. However, a strong theory is still useful in the absence of a comparison group.

Impact research should determine if a program contributed to an observed change. A comparison group is one of the many approaches to inferring causality. But even without comparison groups, you can connect the dots. Consider the way that justice systems establish causality beyond reasonable doubt. Theories are developed, information is gathered, and evidence is built to create a case for the initial theory and for alternative explanations. Evaluators have been inspired by this and we highlight alternative approaches to understanding causality.

You might never be certain but you can get close

In realist research there is no such thing as final truth or knowledge. Nonetheless, it is possible to work towards a closer understanding of whether, how, and why programs work, even if we can never attain absolute certainty or provide definitive proof. Moreover, to approach the truth, we must acknowledge not only what worked but also what did not work. Insights into the positive, negative, and null effects all serve to refine theories of change, the implications of which are broader than any single program.

To conclude, our best sources of evidence come from numerous methodologies in dialogue with each other. But we need the digital finance community to lead with rigorous theories of change, find a comfortable research method, and join the impact conversation. To learn more about how you can add to the conversation, read Snapshot 15.